
Beyer, Lawler, Jacobs Introduce Bipartisan Legislation to Promote AI Foundation Model Transparency

Washington, March 26, 2026

Tags: Science and Innovation

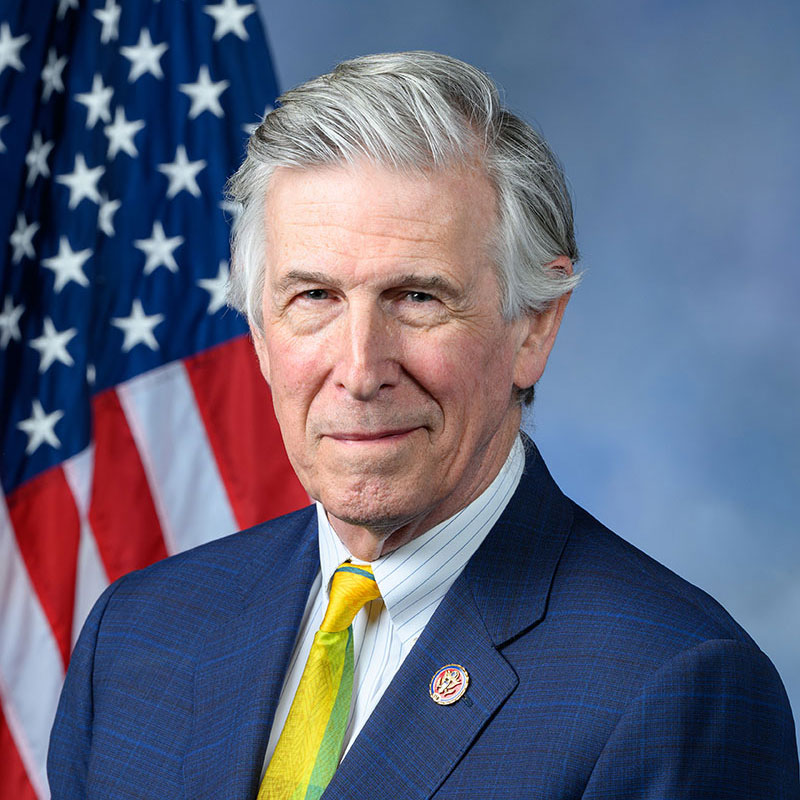

U.S. Representatives Don Beyer (D-VA), Mike Lawler (R-NY), and Sara Jacobs (D-CA) today introduced the AI Foundation Model Transparency Act, landmark bipartisan legislation to establish transparency requirements for how artificial intelligence (AI) foundation models are built, trained, and deployed.

Foundation models are trained on vast and diverse datasets and power many of our generative AI tools, including chatbots like ChatGPT, Claude, Gemini, and Grok. Despite the growing influence of these tools, information about what data these models are trained on, or how they have been trained and tested, is generally not available to the public. These models can produce inaccurate, imprecise, or biased responses due to limitations or biases in the training data or in how the model was trained. This can have adverse real-world impacts when models are used in high-impact areas including health-related AI inferences, loan granting, federal grant approval, housing approval, or law enforcement.

The AI Foundation Model Transparency Act would direct the Federal Trade Commission (FTC), in consultation with the National Institute of Standards and Technology (NIST), the Secretary of Commerce, and the Office of Science and Technology Policy (OSTP), to set standards for what information high-impact foundation models must provide to the FTC and what information they must make available to the public. Information identified for increased transparency would include a sufficiently detailed summary of the training data used, how the model is trained, and whether user data is collected during use. The information provided under this bill would be useful both for consumer protection and for artists and creators, who otherwise have very limited insight into training data, addressing widespread concerns about AI from businesses and individuals alike.

“Artificial intelligence foundation models commonly described as a ‘black box’ do not inherently give consumers the tools to understand why a model gives a particular response. Giving users more information about the model – how it was built and what background information it bases its results on – would greatly increase transparency,” said Rep. Don Beyer. “This bill would help users determine if they should trust the model they are using for certain applications, and help identify limitations on data, potential biases, or misleading results. When a model’s bias could lead to harmful results like rejections for housing or loan applications, or faulty medical decisions, the importance of this reform becomes clear and very significant.”

“This is about accountability and getting ahead of a rapidly evolving technology before it outpaces common-sense guardrails,” said Rep. Mike Lawler. “As the general public interacts with AI every day, whether they realize it or not, Americans deserve to know how these systems are built, what data is being used, and where the risks are. Transparency is the foundation for trust, and if we’re going to lead on innovation here in the United States, we also have to lead on protecting consumers, safeguarding our national security, and making sure this technology is used responsibly.”

“Trust will decide the global AI race – separating the countries and developers that earn it from those that don’t. That’s why I’m proud to co-lead the AI Foundation Model Transparency Act, which will help build trust in AI by requiring clear, upfront information about how foundation models are trained, tested, and operated,” said Rep. Sara Jacobs. “Transparency is the first step toward detecting and addressing potential harms, assigning responsibility, and building confidence in systems that are rapidly shaping our lives as well as our economy and national security.”

The AI Foundation Model Transparency Act would:

The legislation has been endorsed by Americans for Responsible Innovation, SAG-AFTRA, and Mental Health America.

Text of the AI Foundation Model Transparency Act is available here.

Rep. Don Beyer (D-VA) serves as co-Chair of the Congressional Artificial Intelligence Caucus. He was one of a handful of members selected to serve on the bipartisan Task Force on Artificial Intelligence, convened by House Democratic Leader Hakeem Jeffries and Speaker Mike Johnson. He is the author of the GUARDRAILS Act and a lead cosponsor of the CREATE AI Act, the Federal Artificial Intelligence Risk Management Act, and the Artificial Intelligence Environmental Impacts Act. Beyer previously served for eight years on the House Committee on Science, Space, and Technology, and is currently attending George Mason University as a part-time student pursuing a master’s degree in machine learning, in part to help inform his work on AI in Congress.